DICOM Annotation Case Study: 75,000 Studies for Diagnostic AI | Taskmonk

How Taskmonk Helped a Leading Teleradiology Provider Annotate 75,000 Studies and Accelerate Diagnostic AI Using DICOM Annotation

Industry: Healthcare / Teleradiology

Use Case: AI-Assisted Diagnostic Imaging & Radiologist Triage

Annotation Type: DICOM Image Annotation — Polygon Masks, Bounding Boxes, Measurements

Dataset Size: 75,000+ annotated DICOM studies across 5 imaging modalities

About the Client

A Bengaluru-based teleradiology provider operating across 75+ hospitals processes over 3,000 diagnostic scans daily, primarily X-rays and CTs, serving patients across India around the clock.

With a team of highly specialised radiologists, experienced data scientists, and healthcare technologists, the provider delivers reads within 30 minutes for emergencies, 4 hours for urgent cases, and 24 hours for routine studies.

With ambitious plans to expand to 150+ facilities and process over 500,000 imaging studies annually, the provider operates in a high-stakes, time-critical environment where every delay in a preliminary finding directly impacts patient outcomes.

The Challenge

What Is Teleradiology and Why Does Annotation Quality Matter?

Teleradiology involves specialist radiologists interpreting medical images remotely, giving hospitals across India access to diagnostic expertise that geography would otherwise deny them.

Teleradiology AI models are only as reliable as the data they are trained on. A mislabeled pulmonary nodule in a training dataset doesn't produce a minor inaccuracy; it produces a model that misses nodules in production, on real patients. For a provider operating at 3,000 scans a day, annotation quality isn't a data engineering concern. It is a clinical one.

The Problem

A 40% year-over-year increase in imaging volume was placing unsustainable pressure on the radiologist team. Key operational challenges included:

- Inconsistent reporting quality: different specialists using different terminology and diagnostic thresholds across locations

- Limited specialist availability: complex cases requiring subspecialty reads with no reliable coverage model

- Turnaround time risk: 30-minute emergency SLAs under pressure as volume scaled

- Night coverage gaps: emergency cases arriving after hours with insufficient radiologist coverage

- No AI safety net: without preliminary detection models, every scan required full manual review

What Was at Stake

Without intervention, the consequences were concrete:

- A delayed stroke CT at 2 am means a patient loses their treatment window.

- Failing emergency SLAs puts hospital contracts at risk.

- Scaling to 150 facilities without solving the workflow problem multiplies every inefficiency.

What Didn't Work

The team had attempted to build AI solutions before approaching Taskmonk. Two prior annotation vendors were engaged. Neither delivered:

- Inconsistent annotation formats: outputs returned as PDFs, flat image overlays, and unstructured bounding boxes, all incompatible with the provider's PACS and AI pipelines

- No standard taxonomy: pathology labels varied by annotator and modality, making cross-study model training impossible

- Edge case failures: early-stage pulmonary nodules and subtle ischemic stroke signs were routinely mislabeled or skipped

- Slow resolution: issue turnaround averaged 5–7 days, stalling model development cycles

- Radiologist bandwidth: the clinical team had no capacity for large-scale labeling work on top of their read volume

The core problem wasn't annotation volume. It was precision, consistency, and workflow complexity across multiple imaging modalities, each requiring different tools, taxonomies, and QA logic.

The Solution

Taskmonk worked directly with the provider's radiologists and data scientists to design annotation pipelines specific to each modality and finding type. The implementation followed four deliberate phases.

Phase 1: Establishing the Foundation

- Modality: Chest X-ray (highest volume, most immediate clinical priority)

- Tooling: DICOM-native platform with window/level adjustment, full metadata preservation, polygon masks, length/area measurements

- Taxonomy: Standardised pathology labeling schema covering pneumonia, tuberculosis, and pleural effusion, enforced consistently across all annotators from day one

- Impact: Eliminated the terminology drift and format inconsistency that had undermined both previous vendor attempts
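
To make the Phase 1 tooling concrete, here is a minimal Python sketch of two of the operations listed above: window/level adjustment and an area measurement on a polygon mask. It is an illustration only, not Taskmonk's implementation. The function names are hypothetical, the windowing is a simplified linear mapping, and the polygon vertices are assumed to be in pixel coordinates, with pixel spacing given as (row spacing, column spacing) in millimetres, as in the DICOM PixelSpacing attribute.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float]  # (column, row) in pixel coordinates


def apply_window(pixels: Sequence[float], center: float, width: float) -> List[int]:
    """Map raw intensities to 0-255 display values with a simplified linear window."""
    if width <= 0:
        raise ValueError("window width must be positive")
    low = center - width / 2.0
    high = center + width / 2.0
    display = []
    for value in pixels:
        clamped = min(max(value, low), high)  # values outside the window saturate
        display.append(round((clamped - low) / (high - low) * 255))
    return display


def polygon_area_mm2(vertices: Sequence[Point], pixel_spacing: Tuple[float, float]) -> float:
    """Area of a polygon annotation in square millimetres (shoelace formula)."""
    if len(vertices) < 3:
        raise ValueError("a polygon needs at least three vertices")
    row_mm, col_mm = pixel_spacing
    twice_area_px = 0.0
    for i, (x0, y0) in enumerate(vertices):
        x1, y1 = vertices[(i + 1) % len(vertices)]
        twice_area_px += x0 * y1 - x1 * y0
    # Each pixel covers row_mm * col_mm square millimetres.
    return abs(twice_area_px) / 2.0 * row_mm * col_mm


if __name__ == "__main__":
    print(apply_window([-1000, 40, 1000], center=40, width=400))
    square = [(0, 0), (10, 0), (10, 10), (0, 10)]
    print(polygon_area_mm2(square, pixel_spacing=(0.5, 0.5)))
```

Keeping measurements tied to the pixel spacing stored in the study metadata is one reason metadata preservation matters: the same polygon drawn on a flat image export has no physical scale.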

Phase 2: Building Quality Control Into the Workflow

- Consensus reviews: multiple radiologists independently labeling the same study before arbitration

- Blind reads: annotators working without visibility into prior labels

- Hierarchical review: junior-senior radiologist pairing at each QA stage

- Automated QA: real-time inter-annotator agreement tracking, with disagreements surfaced at the image level across every batch

- Result: QA pipelines built directly into the workflow, not bolted on post-export
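
The case study does not say which agreement metric was tracked. As one plausible sketch, the Python below computes Cohen's kappa for two annotators' study-level labels and applies a simple majority rule that sends studies without a clear consensus to arbitration. The function names, the 0.6 vote-share threshold, and the sample labels are all assumptions made for illustration.

```python
from collections import Counter
from typing import Optional, Sequence


def cohens_kappa(labels_a: Sequence[str], labels_b: Sequence[str]) -> float:
    """Chance-corrected agreement between two annotators over the same studies."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("both annotators must label the same non-empty set of studies")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    # Agreement expected by chance, from each annotator's label frequencies.
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (observed - expected) / (1.0 - expected)


def consensus_label(votes: Sequence[str], min_share: float = 0.6) -> Optional[str]:
    """Return the majority label, or None when the study needs arbitration."""
    if not votes:
        raise ValueError("at least one vote is required")
    label, count = Counter(votes).most_common(1)[0]
    return label if count / len(votes) >= min_share else None


if __name__ == "__main__":
    reader_1 = ["pneumonia", "normal", "effusion", "normal"]
    reader_2 = ["pneumonia", "normal", "normal", "normal"]
    print(round(cohens_kappa(reader_1, reader_2), 3))
    print(consensus_label(["pneumonia", "pneumonia", "normal"]))
    print(consensus_label(["pneumonia", "normal", "effusion"]))
```

Because blind reads keep each annotator's labels independent, a chance-corrected measure such as kappa is meaningful here; region-level annotations like polygon masks would need an overlap measure instead.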

Phase 3: Model Training

- Dataset: Clean, consensus-validated DICOM annotations for the chest X-ray modality

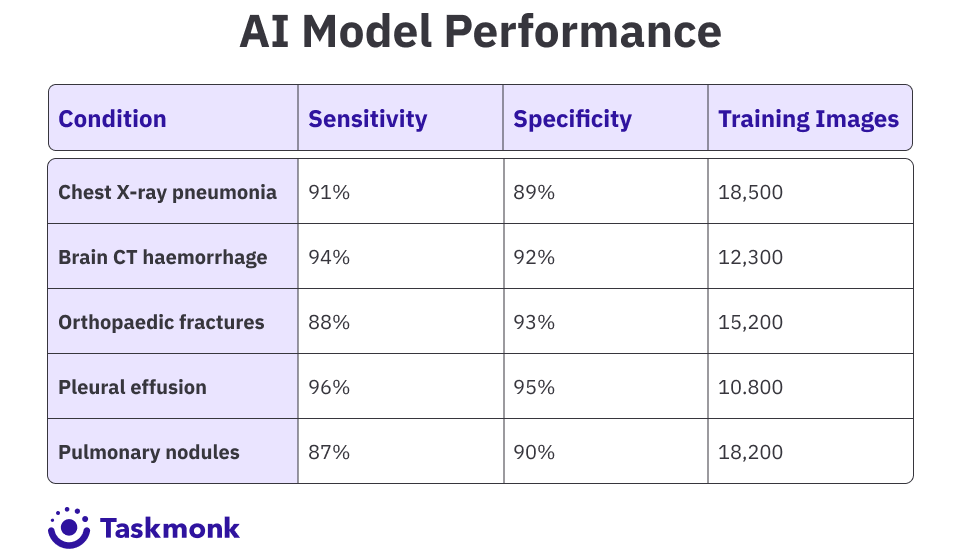

- Use cases: Five priority detection models (pneumonia, haemorrhage, fractures, pleural effusion, pulmonary nodules)

- Format: Pre-annotated datasets structured for direct ingestion into the provider's AI training pipeline

Phase 4: Expanding Across Modalities

- Extended to: Brain CT, abdominal ultrasound, mammography, orthopaedic X-rays

- Configuration: Each modality is given its own annotation schema, QA thresholds, and review logic

- Setup: No-code workflow builder; new modality pipelines configured in days, with no engineering effort required

- Visibility: Annotation progress tracked in real time across the provider's distributed team

- Support: Issues resolved within 24 hours throughout the engagement, versus 5–7 days with prior vendors
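
The idea of per-modality schemas, QA thresholds, and review logic can be pictured as a small configuration structure. The Python sketch below is hypothetical: the platform configures this through a no-code builder, and the class name, label sets, thresholds, and reviewer counts here are invented for illustration rather than taken from the engagement.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet


@dataclass(frozen=True)
class ModalityConfig:
    labels: FrozenSet[str]    # allowed pathology labels for this modality
    min_agreement: float      # agreement below this escalates to senior review
    reviewers_per_study: int  # independent reads before arbitration


# Illustrative values only; real schemas and thresholds are not given in the source.
PIPELINES: Dict[str, ModalityConfig] = {
    "chest_xray": ModalityConfig(
        labels=frozenset({"pneumonia", "tuberculosis", "pleural_effusion"}),
        min_agreement=0.80,
        reviewers_per_study=2,
    ),
    "brain_ct": ModalityConfig(
        labels=frozenset({"haemorrhage", "ischemic_stroke"}),
        min_agreement=0.90,
        reviewers_per_study=3,
    ),
}


def validate_label(modality: str, label: str) -> None:
    """Reject labels that are not part of the modality's schema."""
    if label not in PIPELINES[modality].labels:
        raise ValueError(f"{label!r} is not in the {modality} schema")


def needs_senior_review(modality: str, agreement: float) -> bool:
    """Apply the modality's own QA threshold to a batch agreement score."""
    return agreement < PIPELINES[modality].min_agreement


if __name__ == "__main__":
    validate_label("chest_xray", "pneumonia")
    print(needs_senior_review("brain_ct", 0.85))
    print(needs_senior_review("chest_xray", 0.85))
```

Enforcing the label set at entry time is what prevents the taxonomy drift described earlier, since an annotator cannot introduce a label the schema does not define.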

The Results

Annotation Output: 6 Months

- 75,000+ DICOM studies annotated across multiple modalities

- 98.7% annotation accuracy validated through expert consensus review

- 12x increase in annotation throughput

- 85% decrease in annotation time per study

The provider absorbed a 40% growth in imaging volume without a proportional increase in headcount. AI-assisted prioritisation took over initial triage work that had previously demanded human coverage around the clock.

These are not research benchmarks. They are models running on live patient data, in production, across 75 hospitals, detecting findings that radiologists use to make treatment decisions.

Why Taskmonk

After struggling with two prior annotation vendors, the provider evaluated four platforms before selecting Taskmonk. The requirement was non-negotiable: a platform purpose-built for DICOM-native workflows, not retrofitted for them.

Built for Medical Imaging, Not Adapted for It

- Native DICOM architecture: full metadata preservation, WADO integration, annotation outputs compatible directly with PACS

- Radiologist-grade tooling: polygon masks, precise measurements, per-study window/level adjustment built in

- No format conversion: annotations flow directly into AI pipelines without data loss or manual re-import

Workflow Configurability Without Engineering Overhead

- No-code workflow builder: modality-specific pipelines configured in days, not sprints

- Configurable QA logic: different annotation schemas, thresholds, and review flows per modality

- Scales with the program: workflows evolved as coverage expanded from chest X-ray to five modalities without slowdown

Annotation Operations Managed End to End

- Affinity-based annotator routing: studies assigned to annotators with matching domain expertise, reducing error rates

- Distributed team management: annotation progress tracked in real time across all annotators and reviewers

- 24-hour support SLA: issues resolved within one business day throughout the engagement
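
Affinity-based routing is described above only at a high level. One simple way to picture it, sketched in Python under assumptions, is to filter annotators by matching expertise and then assign the study to the least-loaded match. The `Annotator` class, the `route_study` function, and the load-balancing tie-breaker are hypothetical and do not describe the platform's actual routing logic.

```python
from dataclasses import dataclass
from typing import List, Optional, Set


@dataclass
class Annotator:
    name: str
    expertise: Set[str]    # modalities this annotator is qualified to label
    open_studies: int = 0  # current workload


def route_study(modality: str, annotators: List[Annotator]) -> Optional[Annotator]:
    """Assign a study to the least-loaded annotator with matching expertise."""
    qualified = [a for a in annotators if modality in a.expertise]
    if not qualified:
        return None  # no match: leave the study unassigned for manual handling
    chosen = min(qualified, key=lambda a: a.open_studies)
    chosen.open_studies += 1
    return chosen


if __name__ == "__main__":
    team = [
        Annotator("A", {"chest_xray"}, open_studies=3),
        Annotator("B", {"chest_xray", "brain_ct"}, open_studies=1),
        Annotator("C", {"mammography"}),
    ]
    assigned = route_study("chest_xray", team)
    print(assigned.name if assigned else "unassigned")
```

Returning `None` rather than falling back to any available annotator reflects the point of the feature: a study read by someone outside their domain is more likely to be mislabeled.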

What’s Next

With a proven AI-assisted diagnostic platform now in production across 75 hospitals, the provider is expanding its capabilities in four directions:

- Network expansion: scaling annotation and model coverage to 150+ facilities as the hospital network grows through 2026

- New modality coverage: extending AI detection to MRI studies and contrast-enhanced CT for more complex diagnostic use cases

- Subspecialty detection: developing models for cardiology, neurology, and oncology findings currently handled exclusively by specialist radiologists

- Automated reporting: moving from preliminary finding flagging to structured AI-generated draft reports for routine studies

Taskmonk continues to support ongoing model retraining, edge case annotation, and new detection class development as the provider's AI diagnostic capabilities mature.

Where previous tools were adapted for medical imaging, Taskmonk was built for it.